Introduction

The air in Silicon Valley is once again filled with excitement, and this time the epicenter is OpenAI.

Is OpenAI’s singularity moment approaching?

Recently, a post went viral on X:

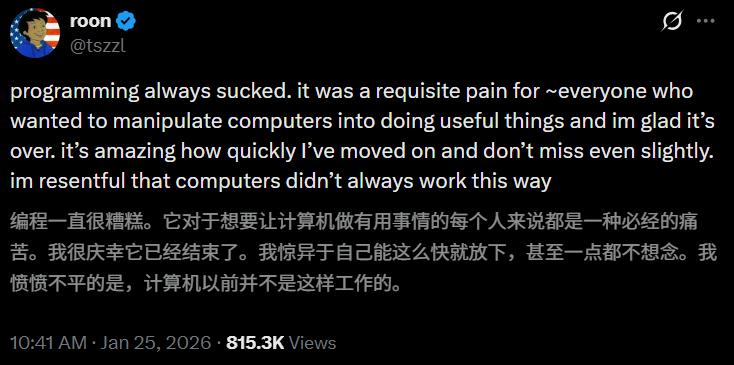

Codex has officially taken over 100% of the coding work from OpenAI researcher “Roon”!

Roon expressed a mix of emotions:

Programming has always been painful, but it was a necessary path. I’m glad it’s finally over.

I’m surprised I was able to escape the shadow of programming so quickly, and I don’t miss it at all. I even regret that computers weren’t like this in the past.

Back in December, Boris Cherny, the creator of Claude Code, dropped a bombshell:

My contributions to Claude Code were 100% completed by Claude Code.

This self-evolving phenomenon ignited a coding automation frenzy in Silicon Valley.

Faced with such a massive opportunity, OpenAI is clearly not going to sit idly by.

The counterattack has already begun.

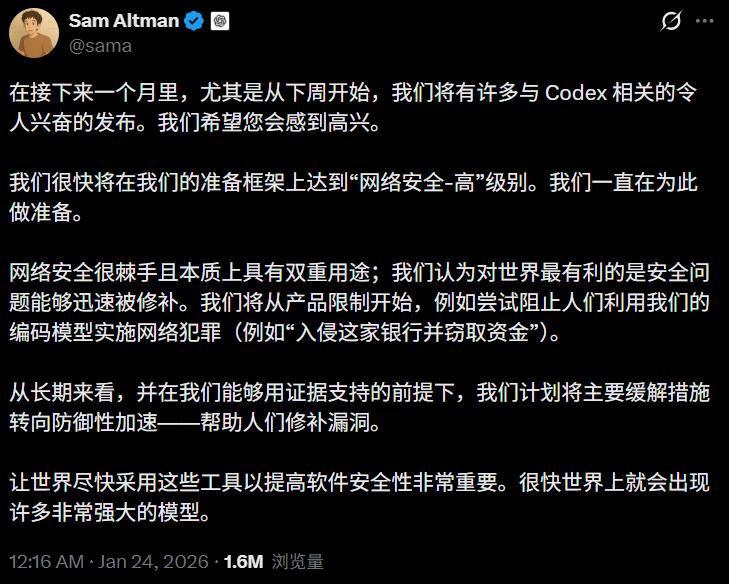

Just this past weekend, Sam Altman publicly announced that a series of new products based on the Codex coding model will be released in the coming month.

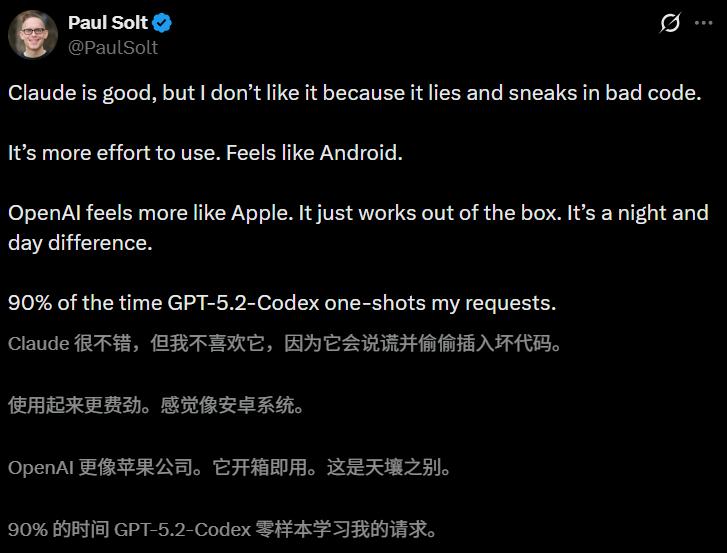

The community’s sentiment is also subtly shifting.

Some veteran developers commented that in 90% of cases, GPT-5.2-Codex can fulfill their requests in one go.

Claude is good, but it occasionally sneaks in “bad code”; in contrast, OpenAI’s new solution is more like Apple—emphasizing a plug-and-play experience.

It seems that the battle between Codex and Claude Code is about to erupt!

Is the Era of Human Coding Over?

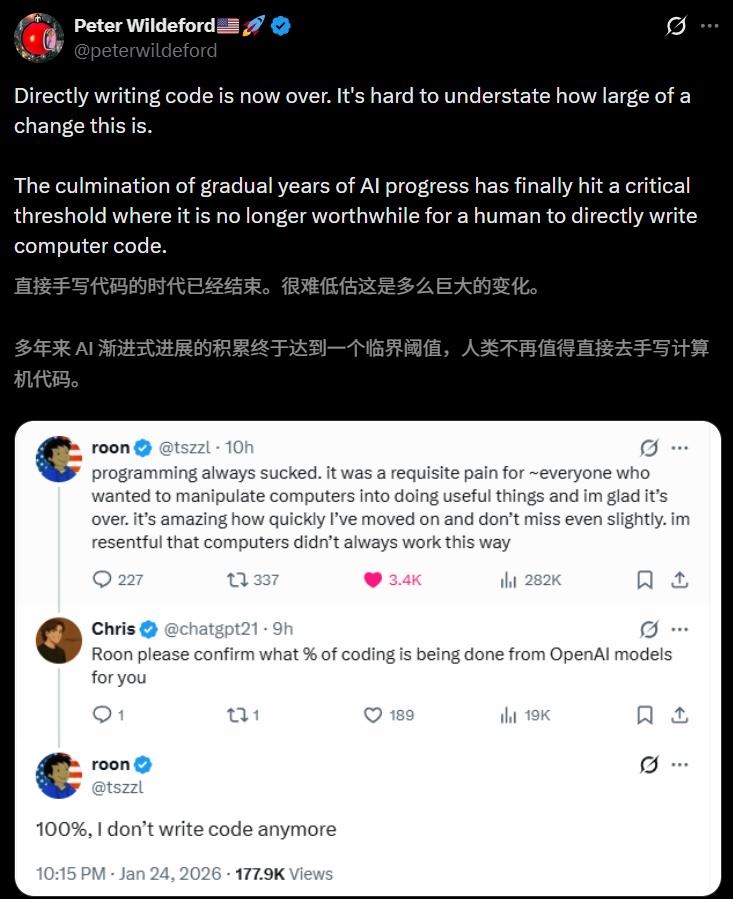

Roon’s revelation has led many netizens to declare: AI has finally reached this singularity!

It appears that the era of humans directly writing code is truly coming to an end.

After years of model iterations and data accumulation, we seem to be standing at a critical juncture:

Writing code by hand is becoming pointless, even inefficient.

In Roon’s comment section, people began to collectively say goodbye to the programming era.

Yes, I love computers and software development; for me, programming is just a means to achieve goals, nothing more.

Complex syntax is merely the expensive price we pay to execute logic.

Now, these intermediaries can finally exit the stage.

Radical views began to emerge.

Some even suggested that since humans no longer need to read code, we should let models skip human-readable assembly language and use machine code directly.

Today’s programming is like the punch cards of the past; it should disappear forever.

Meanwhile, another explosive piece of news leaked from within OpenAI—

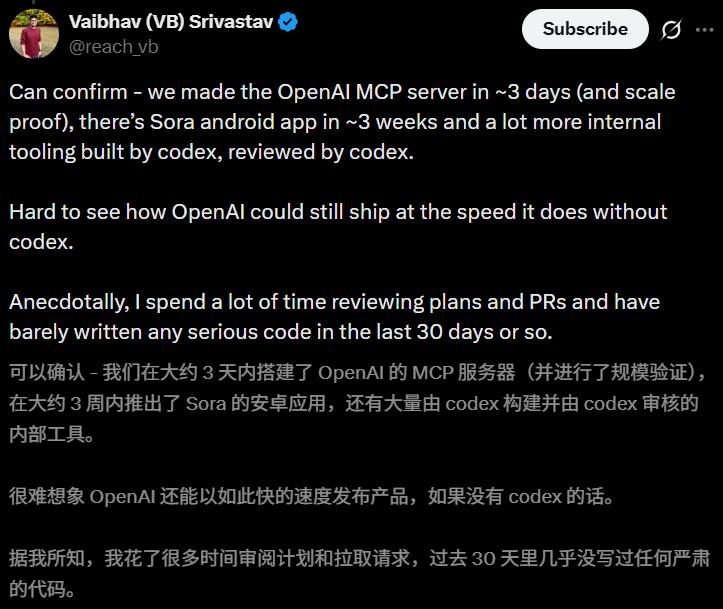

A researcher revealed that with the help of Codex, they built OpenAI’s MCP server from scratch in just three days and completed scalability validation.

Not only that, but they also launched the Sora Android app within three weeks; additionally, a wave of internal tools built and even self-audited by Codex is lined up for release.

Without Codex, it is hard to imagine OpenAI could release products at such an astonishing speed.

Interestingly, the researcher seems to be echoing the words of Claude Code’s creator:

In the past 30 days, I spent a lot of time reviewing Plans and PRs, hardly writing a line of code!

Some commented that this is just the first phase of “takeoff.”

The next step may be true end-to-end AI autonomous research.

Some questioned whether this was just marketing.

The researcher explained in detail that it absolutely is not.

The specific usage process is as follows:

First, they spend a lot of time writing specifications and envisioning what the output should look like.

Then, they initiate a “4×Codex” cloud concurrent task. This allows them to see multiple different variants at once and fill in any details they initially overlooked.

Next, they let Codex do its thing. Once it finishes running, humans intervene for testing and validation.
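The fan-out step can be pictured with a small sketch. This is only an illustration of the pattern (one specification, several parallel attempts, then human validation), not how Codex cloud tasks are actually invoked: `run_variant`, `passes_review`, and `fan_out` are hypothetical names, and the agent call is replaced by a stub.

```python
from concurrent.futures import ThreadPoolExecutor


def run_variant(spec: str, variant_id: int) -> dict:
    """Hypothetical stand-in for one agent run; a real setup would call the coding agent here."""
    return {"variant": variant_id, "spec": spec, "patch": f"candidate patch #{variant_id}"}


def passes_review(result: dict) -> bool:
    """Placeholder for the human testing and validation step."""
    return bool(result["patch"])


def fan_out(spec: str, n: int = 4) -> list:
    """Launch n independent attempts at the same specification in parallel."""
    with ThreadPoolExecutor(max_workers=n) as pool:
        futures = [pool.submit(run_variant, spec, i) for i in range(1, n + 1)]
        # Collect results in submission order so variants stay easy to compare.
        return [f.result() for f in futures]


if __name__ == "__main__":
    variants = fan_out("Add pagination to the list endpoint")
    accepted = [v for v in variants if passes_review(v)]
    print(f"{len(accepted)} of {len(variants)} variants passed validation")
```

The point of running several variants is less about speed than about comparison: seeing four answers to the same specification tends to expose details the specification left out.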

Codex CLI 0.9+ is Here!

Since the paradigm of “human-machine collaboration” has changed, the tools that support this paradigm naturally need to be upgraded.

Facing the pressure from Anthropic, OpenAI is clearly prepared.

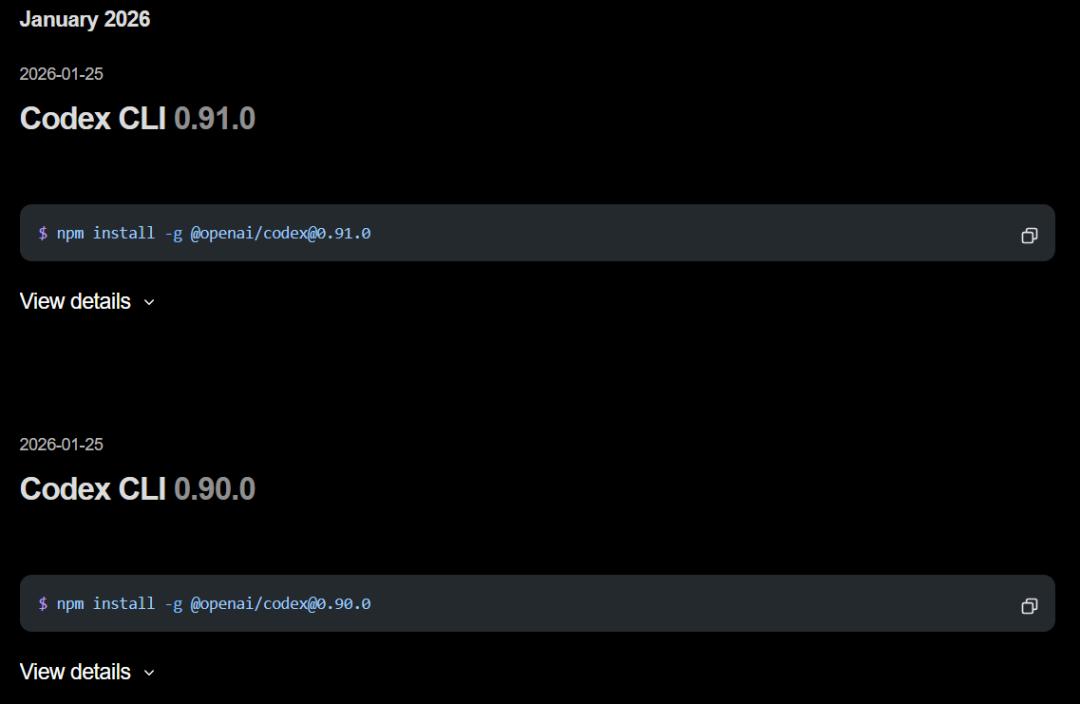

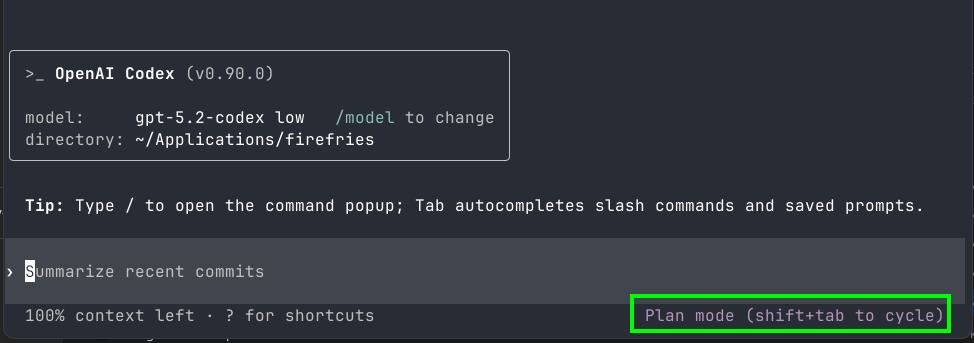

Today, Codex CLI pushed two updates consecutively, bringing the version number to 0.91.0.

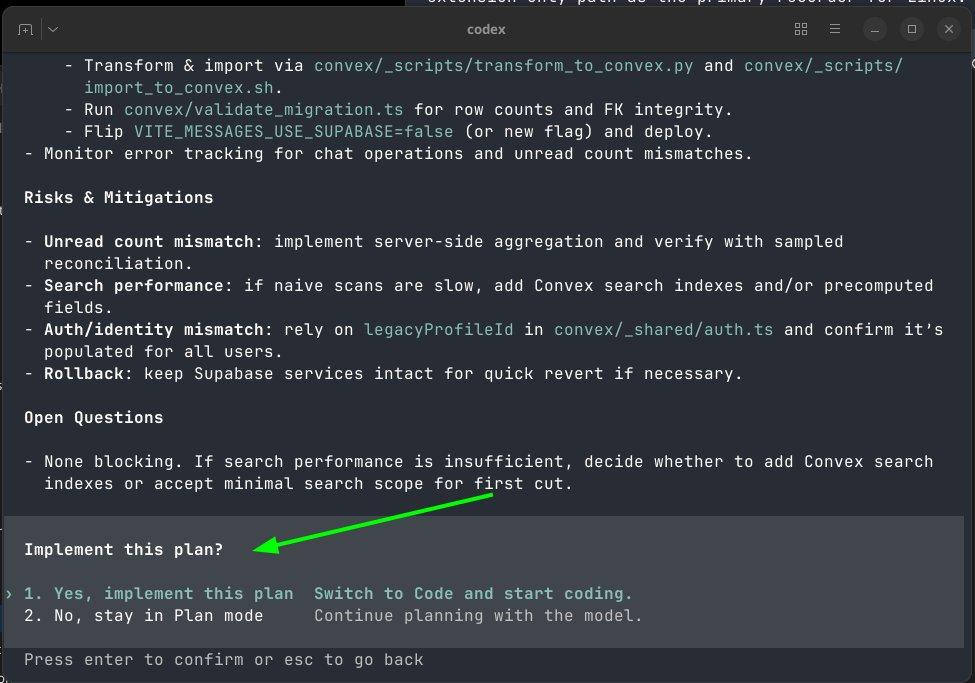

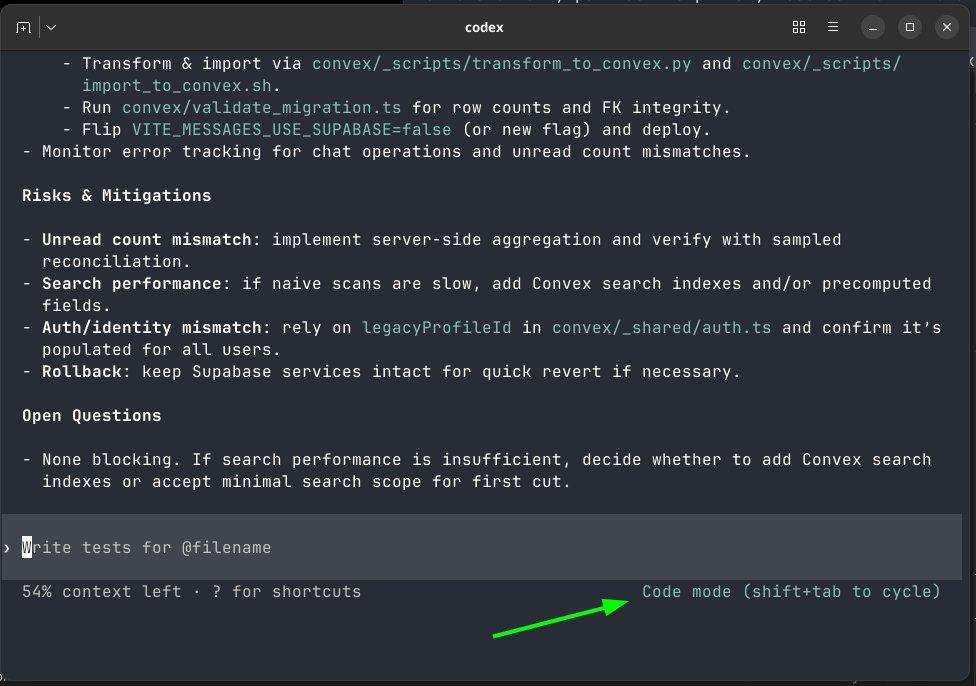

Of the two, Codex 0.90.0 introduced the most anticipated feature: Plan Mode!

Code Mode is Codex’s default experience. It works much like other AI coding agents, so we won’t elaborate on it here.

However, Plan Mode is completely different; it breaks down programming tasks into two distinct phases:

Phase One: Understanding Intent (clarifying goals, defining scope, identifying constraints, establishing acceptance criteria)

Phase Two: Technical Specifications (generating a comprehensive implementation plan)

In this mode, the output is very detailed and can be executed directly without any follow-up questions.

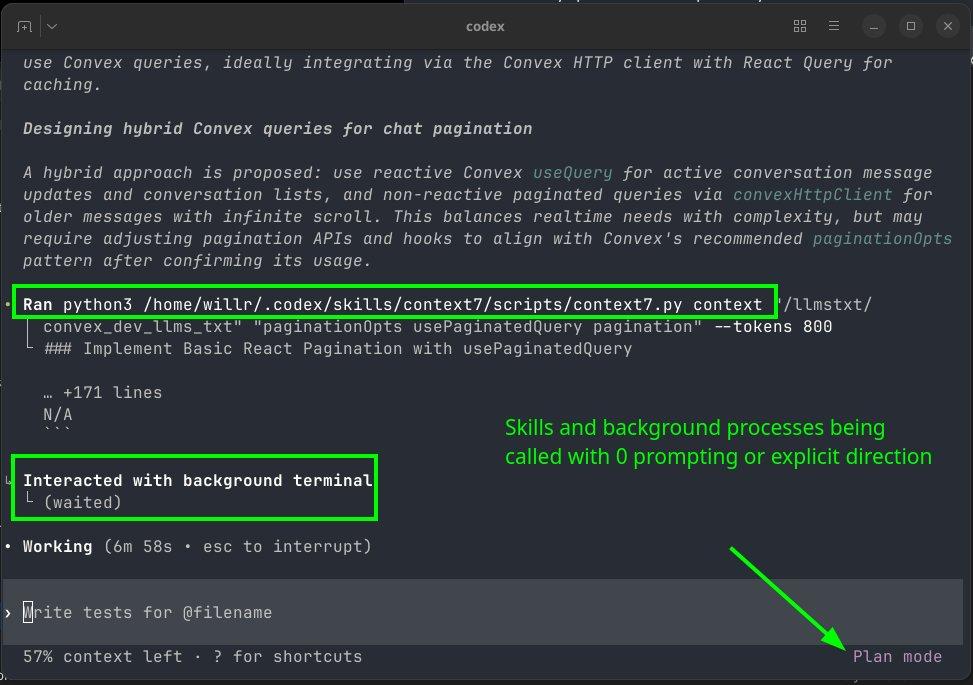

The smartest aspect of Plan Mode is that it adheres to “evidence-first exploration.”

Before asking any questions, Codex runs more than two targeted searches of your codebase, checking configurations, schemas, program entry points, and so on.

Additionally, Plan Mode can call a full suite of tools:

It can (and will) invoke various skills, sub-agents, and background terminals to construct high-level implementation plans.

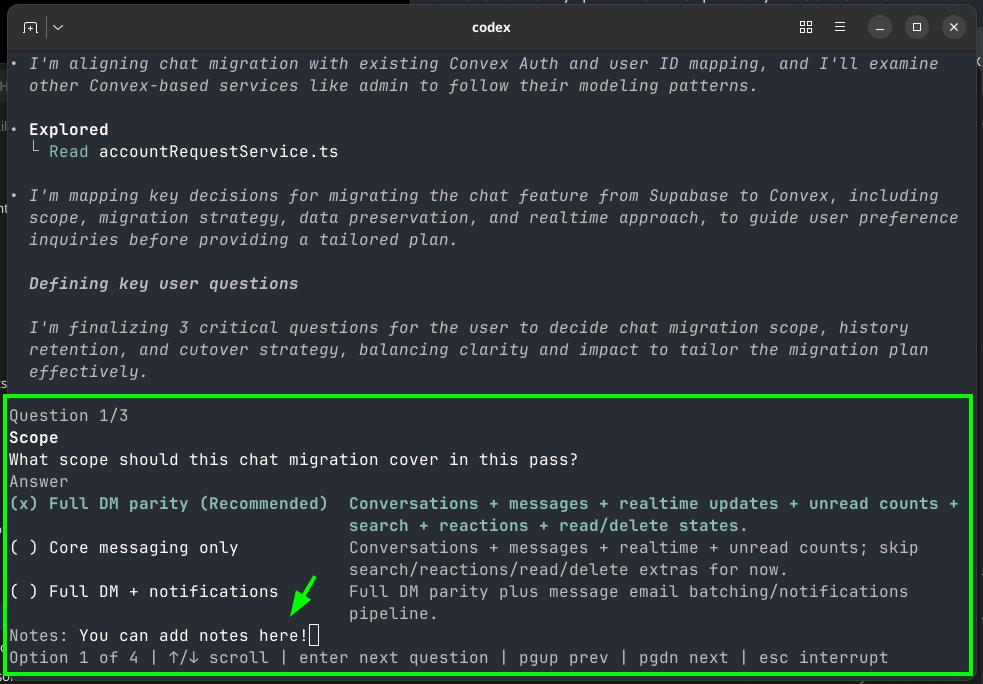

When Codex does need your input, it asks in a structured way and sticks to key, focused questions:

- Provide options whenever possible

- Always include a recommended option (very user-friendly for beginners)

- Only ask questions that will materially change the plan

To facilitate this interaction, it uses a new request_user_input tool.

This tool pauses execution, poses a targeted multiple-choice question, and lets you add feedback or context along with your selection.
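To make the three question rules concrete, here is a minimal sketch of what such a structured question could look like as data. The `Option`, `Question`, and `resolve` names are hypothetical and do not reflect the real schema of request_user_input; requiring exactly one recommended option is also an assumption made for the example.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Option:
    label: str
    recommended: bool = False


@dataclass(frozen=True)
class Question:
    prompt: str
    options: Tuple[Option, ...]

    def __post_init__(self) -> None:
        # Assumption: "always include a recommended option" means exactly one.
        if sum(o.recommended for o in self.options) != 1:
            raise ValueError("exactly one option must be marked as recommended")

    def render(self) -> str:
        """Format the question as a numbered multiple-choice prompt."""
        lines = [self.prompt]
        for i, opt in enumerate(self.options, start=1):
            suffix = " (recommended)" if opt.recommended else ""
            lines.append(f"  {i}. {opt.label}{suffix}")
        return "\n".join(lines)


def resolve(question: Question, choice: Optional[int] = None, note: str = "") -> dict:
    """Return the chosen option (1-based); with no choice, use the recommended one."""
    if choice is None:
        selected = next(o for o in question.options if o.recommended)
    elif 1 <= choice <= len(question.options):
        selected = question.options[choice - 1]
    else:
        raise ValueError("choice is out of range")
    return {"answer": selected.label, "note": note}


if __name__ == "__main__":
    q = Question(
        "Which storage backend should the plan target?",
        (Option("SQLite", recommended=True), Option("PostgreSQL")),
    )
    print(q.render())
    print(resolve(q, choice=2, note="We already run Postgres in production"))
```

Marking a recommended option gives a sensible default, which is what makes this style of question friendly to beginners: accepting the recommendation is always a valid answer.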

Better still, if it detects ambiguity at any point, especially when your instructions are vague, it stops immediately to confirm instead of executing blindly.

The development process now looks like this:

User requests a plan -> AI researches the codebase and drafts a plan -> AI asks the user targeted questions -> AI refines and completes the plan -> AI prompts: execute?
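The flow above can be read as a small state machine. The sketch below is one way to model it, under stated assumptions: the stage names are invented for illustration, and two transitions are guesses rather than documented behavior (skipping the question step when nothing is ambiguous, and looping back to ask again if refinement uncovers new ambiguity).

```python
from enum import Enum, auto


class Stage(Enum):
    REQUEST = auto()    # user requests a plan
    RESEARCH = auto()   # AI researches the codebase and drafts a plan
    ASK_USER = auto()   # targeted questions to the user
    REFINE = auto()     # AI refines and completes the plan
    CONFIRM = auto()    # prompt: execute?


# Allowed transitions. RESEARCH -> REFINE and REFINE -> ASK_USER are assumptions.
TRANSITIONS = {
    Stage.REQUEST: {Stage.RESEARCH},
    Stage.RESEARCH: {Stage.ASK_USER, Stage.REFINE},
    Stage.ASK_USER: {Stage.REFINE},
    Stage.REFINE: {Stage.ASK_USER, Stage.CONFIRM},
    Stage.CONFIRM: set(),
}


def is_valid_path(path: list) -> bool:
    """True if the path starts at REQUEST and every consecutive step is allowed."""
    if not path or path[0] is not Stage.REQUEST:
        return False
    return all(nxt in TRANSITIONS[cur] for cur, nxt in zip(path, path[1:]))


if __name__ == "__main__":
    happy_path = [Stage.REQUEST, Stage.RESEARCH, Stage.ASK_USER, Stage.REFINE, Stage.CONFIRM]
    print(is_valid_path(happy_path))
    print(is_valid_path([Stage.REQUEST, Stage.CONFIRM]))
```

Note that no path reaches the execution prompt without passing through research first, which is the "evidence-first" idea expressed as a constraint.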

But Who Reviews the Code?

It seems flawless, right? Codex thinks, Codex executes, Codex fills your GitHub.

But just as we celebrate this extreme efficiency, a neglected abyss is opening beneath us—

In this new era, the biggest open question is no longer who writes the code, but who reviews it.

As AI fires on all cylinders, throwing 10+ PRs into the repository daily, human developers are facing what is essentially a DDoS attack on their attention.

AI generates code in milliseconds, while a human needs minutes or even hours to understand its context.

This “extreme asymmetry between production and review” brings two terrifying consequences:

- Reviewers are overwhelmed and begin to habitually click “Approve,” reducing Code Review to a formality.

- Code blocks that seem runnable but lack systematic thinking are spreading through the codebase like cancer cells.
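The asymmetry between production and review described above can be put into rough numbers. The sketch below is a back-of-the-envelope model only: the 10 PRs per day comes from the text, while the 45 minutes per review and the two hours of daily review time are illustrative assumptions, not measurements.

```python
def review_backlog(prs_per_day: int, minutes_per_review: float,
                   review_minutes_per_day: float, days: int) -> int:
    """Unreviewed PRs after `days`, assuming constant production and review rates."""
    if minutes_per_review <= 0:
        raise ValueError("minutes_per_review must be positive")
    # Only fully completed reviews count toward the daily total.
    reviewed_per_day = int(review_minutes_per_day // minutes_per_review)
    backlog = 0
    for _ in range(days):
        backlog = max(0, backlog + prs_per_day - reviewed_per_day)
    return backlog


if __name__ == "__main__":
    # 10 PRs/day produced, 2 reviewed/day: the queue grows by 8 per day.
    print(review_backlog(prs_per_day=10, minutes_per_review=45,
                         review_minutes_per_day=120, days=5))  # 40
```

Under these assumptions the queue reaches 40 unreviewed PRs within a single work week, which is the kind of pressure that turns review into rubber-stamping.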

The conflict of interest is evident, but we need to see through this layer.

It is only natural for the creators of Claude Code to tout their own tool; that is business instinct.

But as the audience, we cannot take the “perfect world in the demo” as our daily reality.

After all, demos don’t showcase the race conditions that take three hours to debug, nor do they reveal logical gaps caused by loss of context.

Moreover, the data hides an intriguing paradox.

Ars Technica reported that while the usage of AI tools among developers is rising, their trust in these tools is declining.

Why? Because AI is crossing the “uncanny valley.”

Previously, AI code was obviously bad; now its flaws are well hidden: it references non-existent libraries or buries bugs in extreme edge cases.

The more people use it, the more pitfalls they encounter, leading to less trust.

As Jaana Dogan warned, we are facing the risk of “trivialization” in software engineering.

- 100 commits may make GitHub’s green squares look good.

- 1 architectural change may require three days of thought with zero lines of code produced.

The former is as cheap as dust, while the latter is as precious as gold.

The question is never whether AI can write code, but whether the code it writes is what our system truly needs and whether we have the capacity to maintain it.

What Does This Mean for Us?

Whether we are ready or not, this era has arrived. For different groups, this means entirely different survival rules.

To Developers

AI coding tools are not “coming soon”; they have already burst through the door.

The question is how to integrate them without losing one’s core value.

Tech experts are still doing the hard thinking work; AI has merely taken over the role of the “typist.”

If you only know how to “move code,” then you should indeed be worried.

To Non-Developers

The boundary between “technical work” and “non-technical work” is dissolving.

Tools like Claude Cowork have created new species. Tasks that once required developers may soon only require you to clearly describe what you want.

The ability to clearly articulate requirements will become the new programming language.

Conclusion

Although OpenAI researchers and the creators of Claude Code claim that AI handles 100% of the code, remember—

That is their lab environment, not your production environment.

What is certain is that we are undergoing an irreversible transition from “writing code” to “commanding code to be written.”

And it is accelerating.
