Introduction

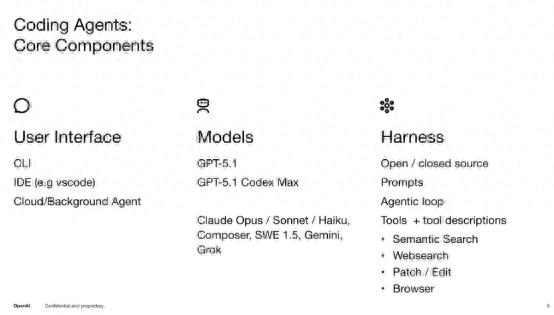

Earlier this year, OpenAI architects Bill Chen and Brian Fioca detailed the challenges overcome during the development of Codex and emerging usage patterns of the Coding Agent. The Coding Agent comprises three parts: the user interface, the model, and Harness.

The user interface can be a command-line tool, an integrated development environment (IDE), or a cloud-based agent. The model is straightforward, such as OpenAI’s GPT-5.1 series or models from other vendors. The Harness is a more complex component that directly interacts with the model. Simplistically, it can be viewed as a core agent loop made up of a series of prompts and tools that provide input and output for the model.

Harness serves as the interface layer for the model, facilitating interaction between the model, users, and code. It includes all the components necessary for the model to function in multi-turn dialogues, call tools, and ultimately write code while interpreting user needs. For some products, Harness may be a critical component.

Recently, Anthropic published a blog titled “Harness Design for Long-Running Application Development,” describing Harness as an external framework, control structure, and orchestration system that supports complex AI agents. It is not a single algorithm but a comprehensive engineering “scaffold” used to manage and amplify AI capabilities.

Harness represents a higher-level abstraction over Prompt Engineering. While prompts determine the quality of a single interaction, Harness dictates the execution flow and reliability of multi-turn, multi-agent, and long-duration tasks.

The core function of Harness is to address the “going off the rails” issue when AI completes complex, time-consuming tasks, compensating for inherent model flaws (such as context anxiety and self-aggrandizement).

Both OpenAI and Anthropic recognize Harness as critical for the deployment of Coding Agents, but they differ on whether to strengthen and thicken Harness or to streamline and lighten it.

Should Harness Expand or Contract?

There seems to be a growing consensus in the industry: the upper limit of AI programming is no longer determined by the model’s single-instance generation capabilities but by Harness Engineering.

Anthropic’s recent engineering articles showcase their deep exploration of Long-running Agents. To solve the “derailment” problem in long-duration tasks, they built a highly structured Harness:

- Structured Handoff: Forces AI to generate “progress reports” before context is exhausted, externalizing state.

- Multi-Agent Collaboration: Introduces roles such as Planner, Generator, and Evaluator for division of labor.

- Context Reset Mechanism: To avoid “context anxiety,” it clears dialogue history, retaining only structured outputs, giving new agents a “blank slate”.

This approach fundamentally advocates for “strengthening and thickening Harness.” They believe that a robust framework can support the most complex tasks.

However, OpenAI Codex’s open-source lead, Michael Bolin, recently signaled a contrary stance during an interview, suggesting that Harness should not expand indefinitely.

He articulated a significant trend observed in Codex’s design philosophy: ideally, Harness should be “as small as possible,” while the model should be “as powerful as possible”. The design philosophy of Codex aims to minimize tool dependencies, avoid excessive intervention, and allow the model to explore solutions in environments closer to real computation (like terminals). This “AGI-oriented” approach essentially reduces human-imposed constraints on the model, returning more decision-making power to the model itself. However, Michael emphasized that safety and sandboxing remain non-negotiable bottom lines and core responsibilities of Harness.

Codex’s philosophy leans towards “thinning and lightening Harness,” illustrated by the following points:

- Minimizing Tool Dependencies: Intentionally reducing specialized tools, allowing the model to use general terminals directly.

- Environment Over Framework: Harness only provides necessary sandbox safety and basic interfaces, avoiding excessive process control.

- Empowering the Model: Exploration, decision-making, and execution logic are primarily left for the model to learn, rather than hardcoded by external orchestration frameworks.

This perspective raises concerns that overly complex Harness could “dumb down” the model or create a heavy engineering burden, slowing down iteration speed.

The divergent paths of OpenAI and Anthropic present a crucial question for AI practitioners: Is Harness the ultimate goal of AI coding, or is it a rapidly expanding intermediate state?

The answer to this question will determine the future shape of products:

- If Harness is the ultimate goal: Future competition will be a “framework war.” Whoever possesses the most robust and versatile Harness (like Anthropic’s multi-agent architecture) will dominate the development process. AI programming will evolve into “system engineering + AI.”

- If Harness is an intermediate state: The current complex frameworks merely compensate for the existing model’s shortcomings. As model capabilities exponentially improve (e.g., stronger memory, longer context, better reasoning), these intricate external orchestrations will eventually be internalized by the model. At that point, Harness will devolve into a simple runtime environment (Sandbox), and core competitiveness will revert to the base model’s capabilities.

Michael Bolin is not a traditional “AI practitioner.” Before joining OpenAI, he worked for Google and Meta, contributing to developer tools and infrastructure, leading or participating in projects like Buck, Nuclide, and DotSlash.

Discussion on AI Coding and Harness Engineering

Host: Today, I’m pleased to welcome Michael Bolin, the head of Codex. People often think the core of AI coding is “the model writing code.” However, many teams building agents believe the real change lies in designing the environment around the model. Which do you resonate with more?

Michael: The model certainly dominates the overall experience. However, we find significant innovation potential at the Harness layer. This is not just a research issue. For our team, the key lies in the synergy between engineering and research—developing agents collaboratively to ensure Harness enables agents to perform at their best. We also need to provide suitable tools, ensuring that these tools have been “seen and practiced” by the model during training, so they won’t be “strange” or “error-prone” when invoked in real product environments.

Host: Let’s define Harness and why it has become so important.

Michael: Harness is sometimes referred to as the Agent loop—it calls the model, samples, and provides context: what I want to do, what tools are available, and what the next step should be. The model then returns a response—usually a tool call, such as “I want to call this tool with these parameters, please tell me the result.”

Some tools are simple, like running an executable and returning stdout and exit codes. We have also conducted many more complex tool experiments, such as controlling machines or user notebooks, functioning more like an interactive terminal rather than simple command execution. It can also perform web searches and other operations.

For Codex, being a coding agent, we emphasize safety and sandbox mechanisms. Therefore, one of the core tasks of Harness is to obtain shell commands or computer operation instructions from the model and ensure they execute in a sandbox or follow user-defined policies. This aspect is quite complex. The key is to unleash the model’s full capabilities while ensuring safe operation on user machines.

Host: When you open-sourced Codex, how did you handle security issues?

Michael: These implementations can actually be seen in our codebase. We handle different operating systems differently: on macOS, we use a technology called Seatbelt. On Linux, we utilize a series of libraries, including Bubblewrap, seccomp, and Landlock. On Windows, we built our own sandbox. Some components, like Seatbelt, are part of macOS, so they are not in the open-source codebase—we just refer to them that way. However, our Windows sandbox code is in the open-source codebase. We coordinate all these calls to ensure they pass through the sandbox appropriately to accommodate different tool calls.

Host: So when others fork Codex, do these security rules also get included?

Michael: Yes, but it’s important to distinguish between “security” and “safety.” What I mentioned earlier is more about security, such as being able to run tools but only accessing specific folders. Safety in the industry occurs more on the backend—whether the model itself will propose appropriate tool calls. From the Harness perspective, it’s more about executing commands, while which commands are safe is determined by the model.

So, if you fork Codex and continue using our model, you inherit this aspect of security. But if you switch to another model, that may not be the case.

How Codex Has Developed

Host: Since you launched Codex, how has its development progressed?

Michael: The response has been very positive, with usage increasing about fivefold compared to earlier this year. We launched it in April 2025 as part of the o3 and o4 mini releases, at which point the model’s tool calling and instruction execution were not ideal. By August, with the release of GPT-5, we updated the CLI, marking a critical turning point. Subsequently, we launched the VS Code plugin, which saw rapid user growth, even surpassing the CLI. Later, the applications released earlier this year also became very popular. I believe it has been genuinely pioneering in many aspects.

Host: In your view, what are the innovative aspects of this application?

Michael: Developers have historically spent most of their time in integrated development environments (IDEs). These are obvious and logical choices.

Developers typically work in IDEs, so it makes sense for us to enter VS Code, JetBrains, and Xcode. With the Codex application, we essentially established a new interface. I view it as a “task control center” that can manage multiple dialogues simultaneously. It retains core IDE capabilities, such as viewing diffs and using the Command-J shortcut to open a terminal without switching windows. It truly breaks the notion that you must always have all code in front of you. For many, the ability to organize and collaborate with multiple agents simultaneously is more valuable. This is the core functionality we strive to achieve.

How Coding Agents Change Developer Workflows

Host: How will coding agents like Codex change developers’ daily work?

Michael: The biggest change is throughput. You can parallelize many tasks. Of course, this brings some context switching, which not everyone enjoys, but if mastered, efficiency can be very high.

I personally maintain about five copies of Codex repositories, frequently switching between them. Sometimes, I notice minor issues while doing other things and quickly fix them. Other times, I spend an entire day addressing a significant change in Codex during meetings. Many people send a message even during a five-minute meeting break just to push a task in another direction.

The second point is that people are spending more time figuring out how to optimize this workflow. Relatively speaking, everything is very novel. Should I turn what I’ve been doing into a reusable skill? Should I share this skill with my team members? Great developers always strive to optimize their inner loops, but this is a brand new inner loop, and everyone is still exploring.

The third noteworthy aspect is code reviews. The number of code reviews has significantly increased, but Codex itself also handles a lot of code review work, saving a lot of time. How to maximize the use of these resources remains an ongoing exploration.

Host: Did you encounter any unexpected challenges during the initial development of Codex?

Michael Bolin: My biggest realization is that technology is evolving too quickly. Codex has been around for less than a year, and considering the immense changes during this time is truly astonishing.

When we launched in April 2025, it was part of the o3 and o4 release plans. At that time, we were using a reasoning model, but tool calling and instruction execution had not met our expectations. It has been gratifying to see improvements in this area over time.

One of the most exciting early experiences was witnessing Codex write more code on its own—seeing this process firsthand. For instance, agents.md gradually became a standard, establishing a framework that allows you to build tools that optimize your workflow. This brings an exponential leap, both exciting and enjoyable. Seeing colleagues genuinely understand Codex and shift more work to it has been fantastic.

What Should Codebases Look Like When Read by Agents?

Host: When codebases are read by agents rather than humans, what should they look like?

Michael: An interesting phenomenon during the entire agent coding journey is that some practices long considered best practices in software development have never truly been practiced. Documentation is one example, and test-driven development is another. People do not completely ignore them, but they often feel the cost outweighs the benefits. Now, in an agent-first world, these practices have become highly valuable. People are almost rediscovering them and genuinely valuing them.

For example, consider the agents.md file and all the content we write in it; I believe it applies equally to new team members—they need to know everything, all best practices. Writing this down benefits both agents and your teammates, which is a relief.

In other words, on Codex, we believe we have embraced the concept of general artificial intelligence (AGI)—meaning agents should truly decide what to do autonomously, rather than us constantly feeding them instructions. Instead of writing a document that runs in parallel with the source code, leading to potential duplication or inconsistencies, we prefer to let agents spend time reading code and forming their own judgments. We will try to add some information to the agents.md file that they cannot quickly glean from the code, such as how to run tests or which tests are more important. However, we aim to avoid excessive intervention, allowing agents to determine the best execution path themselves.

Host: Do you think agents.md will eventually be written by the agents themselves?

Michael: Many people are already doing this, such as including instructions like “update agents.md after completion”. Our team does not enforce this, but it is a common practice.

Many developers include a request like this in their prompts: after completing a task, update the agents.md file with noteworthy information from the process—including less obvious insights or experiences gradually discovered while collaborating with Codex.

However, this has not yet become a universal norm within our team. If you look at the history of our codebase, you will find that we have not systematically done this, but it has become relatively common in the community.

Additionally, academia is beginning to discuss how much information should be appropriately provided to agents. Personally, I believe this largely depends on the specific capabilities of the agent.

In Codex’s practice, we adopt a relatively restrained approach—not writing dozens of pages of detailed instructions but retaining key points for the agent to understand and leverage.

Codex Does Not Generate “Garbage”

Host: Context Engineering seems to be an increasingly important part of this process. For agents, could there be a problem of “too much context”?

Michael: From my experience rather than a research perspective: for medium-sized tasks, I usually describe a piece of code and let Codex familiarize itself with it. Sometimes, if I think it helps, I provide explicit file pointers, but usually, I do not—Codex can search the codebase well on its own.

One easily overlooked yet crucial aspect is ensuring proper naming conventions for files and folders. This is a good habit, and it becomes even more important when the agent program searches the code.

Most context information will come from the agents.md file, the prompts I write, and some file references. I also grant Codex access to GitHub so it can see information like: for example, similar issues appearing in this pull request, allowing it to view not only the code but also discussions around that pull request. But again, this is more about providing Codex with choices—like giving it the tools in a toolbox—rather than dictating how it should solve problems. This is a good model, and it performs well in this regard.

Host: It sounds like this way of working will encourage you to adopt a stricter architecture. Is that the case?

Michael: Certainly. Codex will follow the patterns it discovers in the codebase. If you start with a good architecture, it will adhere to it, maintain it, and enforce the invariants you set. In the long run, you will be in a favorable position. Of course, this applies to human developers as well. The pace of change is much faster now, so having these standards allows you to feel the benefits they bring more profoundly.

Host: Do you still see many flaws in the models and coding agents? How do you address them?

Michael: Honestly, I don’t think there’s anything that can be termed “bad” in Codex. What I see more often is that these models enjoy writing code. So sometimes the right approach is to delete code; you might need to be more explicit about that. But this doesn’t really count as bad—it’s more like: you added 500 lines of code in this file; maybe you should create a new file. These are easier to resolve.

More commonly, Codex has mastered idioms or language features I have yet to encounter and applies them. This is how Codex surprises me more—not by being perfunctory.

The Importance of Models vs. Harness Engineering

Host: You just described how, when Codex started, the model was not yet mature. Now the model has matured significantly, and the application has attracted a broader user base. But I want to ask, which is more powerful: the model or Harness Engineering? Will Harness Engineering eventually become more than just a packaging layer and become a more important environment? Or will the model always remain dominant? In your view, which is more important: the model or Harness Engineering?

Michael Bolin: I understand your point; you’re asking whether it’s possible for Harness Engineering to gradually disappear and no longer play a significant role?

I don’t think that’s impossible. In many ways, we are working to keep Harness as small and lightweight as possible. One notable characteristic of Codex, compared to other agents, is our effort to minimize the tools available to agents. For instance, Codex has very few tools and does not have a dedicated file-reading tool; instead, it uses terminal commands. This aligns with the “AGI philosophy” I mentioned earlier: we give it a broad exploration space to find the best operational path.

The only exception is safety—the sandbox is essential. The sandbox mechanism is a crucial safeguard against Codex running out of control. Sometimes, people try to be clever by controlling the agent to manipulate the context window. But as the authors of Codex, we want to say: “Put away your cleverness; I know better than you.” However, we try to exercise restraint. If Codex is about to run a tool that outputs 1GB of data, our idea is to let Codex write the data to a file first and then use the grep command to search, but allowing it the freedom to choose how to solve the problem.

Host: Do you think it’s possible to encode all these security rules and sandbox mechanisms? Or should there always be human involvement?

Michael: For the coding tasks we focus on, I believe the sandbox mechanism is indeed the primary method to replace human intervention, at least for most of our work. You encounter a problem, hand it to Codex, and it will operate within a sandbox environment constrained in a specific way, allowing it to explore and find the best solution—especially in large-scale applications. I run five clones of Codex simultaneously. If I had to intervene every few minutes with these five versions, it would fundamentally limit their throughput.

These corrective measures should be implemented more during the training phase and then function during the reasoning phase, rather than requiring human intervention.

Host: So, capabilities will increasingly reside in the model rather than in Harness?

Michael Bolin: Yes, the model is more important. However, the reliability of Harness remains crucial. If Harness fails, everything ends. As we inevitably move towards multi-agent and sub-agent architectures—where more agents communicate across different machines—Harness will no longer just be a single process on one machine but will become a network of agents. I anticipate a lot of interesting work ahead. Most of my career has been spent writing tools for developers; now I’m writing more tools for agents. Agents can also write their own tools, but as I said, we prefer to use a small number of powerful tools that allow agents to explore various possibilities fully—we will continue to experiment to find the best tool combinations.

Future Directions for Agents

Host: What do you think are the foundational components of agent coding?

Michael: I believe we have seen many components. For example, what I refer to as shell tools or terminal tools enables the model to use a computer terminal like a human rather than just executing commands directly. It also includes handling streaming output and efficiently utilizing that output.

Memory is another important area. In the past, every conversation started from scratch—this is why there are agents.md and various context-filling mechanisms to quickly import information into the model. If you look at the codebase, you will find many experiments regarding memory.

Additionally, various types of context connectors are undergoing many changes. Initially, we focused on computer tasks on local machines, but now it also encompasses broader work—such as sending emails on your behalf, creating documents, and performing actions in web browsers.

Moreover, there is the standard LLM infrastructure: generally, a larger context window is beneficial; how to compress content when reaching limits; all these are actively explored and contribute to enhancing the overall agent experience.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.